Introduction

A train locomotive is the powerhouse that pushes or pulls the cars that make up the rest of the train. It is the engine, the driving force, and the catalyst for advancement. The European Union’s Artificial Intelligence Act was adopted and entered into force in 2024, and it will be phased in over the course of three years, until 2027. Like a train locomotive, this piece of legislation will fuel the new frontier of regulation and governance for Artificial Intelligence (AI) across the world. This article’s primary aim is to keep regulated businesses and platforms that use AI systems of any type aware of the rules that apply to their operations now and in the near future.

The legislation and its formal updates and additions can be reviewed in the official online text, and a PDF version dated July 12th, 2024 (12.7.2024 in the Official Journal) can be downloaded here. In short, the EU’s AI Act, which I will refer to as the Act from now on, is a well-segmented legal document detailing the compliance requirements for AI systems that affect EU citizens. These systems may or may not be located within the European Union’s borders. The individuals and organizations affected can hold several different roles in the implementation and use of AI systems, and the systems themselves are classified in ways that lead to varying compliance requirements based on their potential impact. This article will answer many questions that anyone might have, from interested parties who simply like being informed to Chief Information Security Officers (CISOs) of international brands. It will not target any one specific AI system, company, or technological role in the implementation of AI systems. It will also not be all-encompassing, as the PDF of the legislation mentioned earlier is 144 pages long. However, no matter how you may be impacted, I will point out throughout the article where you can find additional information. Now, it is time to depart on this expedition of understanding in this modern era. All aboard!

Initial insights

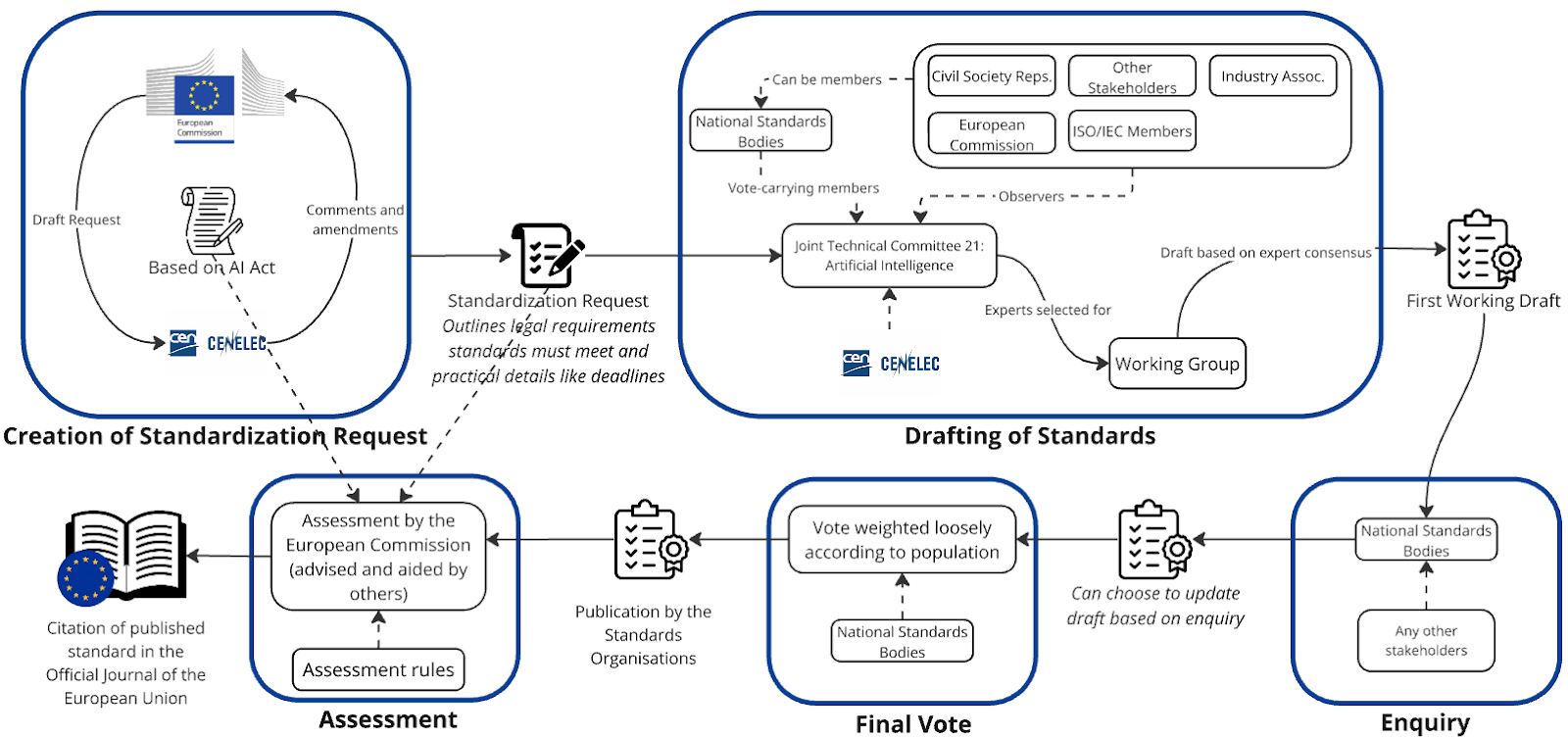

The Act is a set of “harmonized rules on artificial intelligence,” as stated in the header of the legislation. That is all it is. The European Commission has already clarified that official standards to support the Act will take time to finalize, but it has clearly outlined the steps and the timeline for doing so here.

That’s not to say it isn’t law. The Act clearly defines itself as a legal regulatory framework “needed to foster the development, use, and uptake of AI in the internal market that at the same time meets a high level of protection of public interests, such as health and safety and the protection of fundamental rights, including democracy, the rule of law and environmental protection as recognized and protected by Union law.” I would like to note that the Act does not in any way supersede previously established legislation such as the General Data Protection Regulation and the EU’s Market Surveillance Regulation. The Act specifically affirms the safeguards implemented by these prior regulations and makes no effort to redefine how they are implemented. Simply put, the Act does not rewrite the existing rules on the personal data of European citizens. Instead, it specifies how all of these legal frameworks work in tandem to uphold health, safety, privacy, and the rule of law for European Union citizens.

Now, onto AI systems.

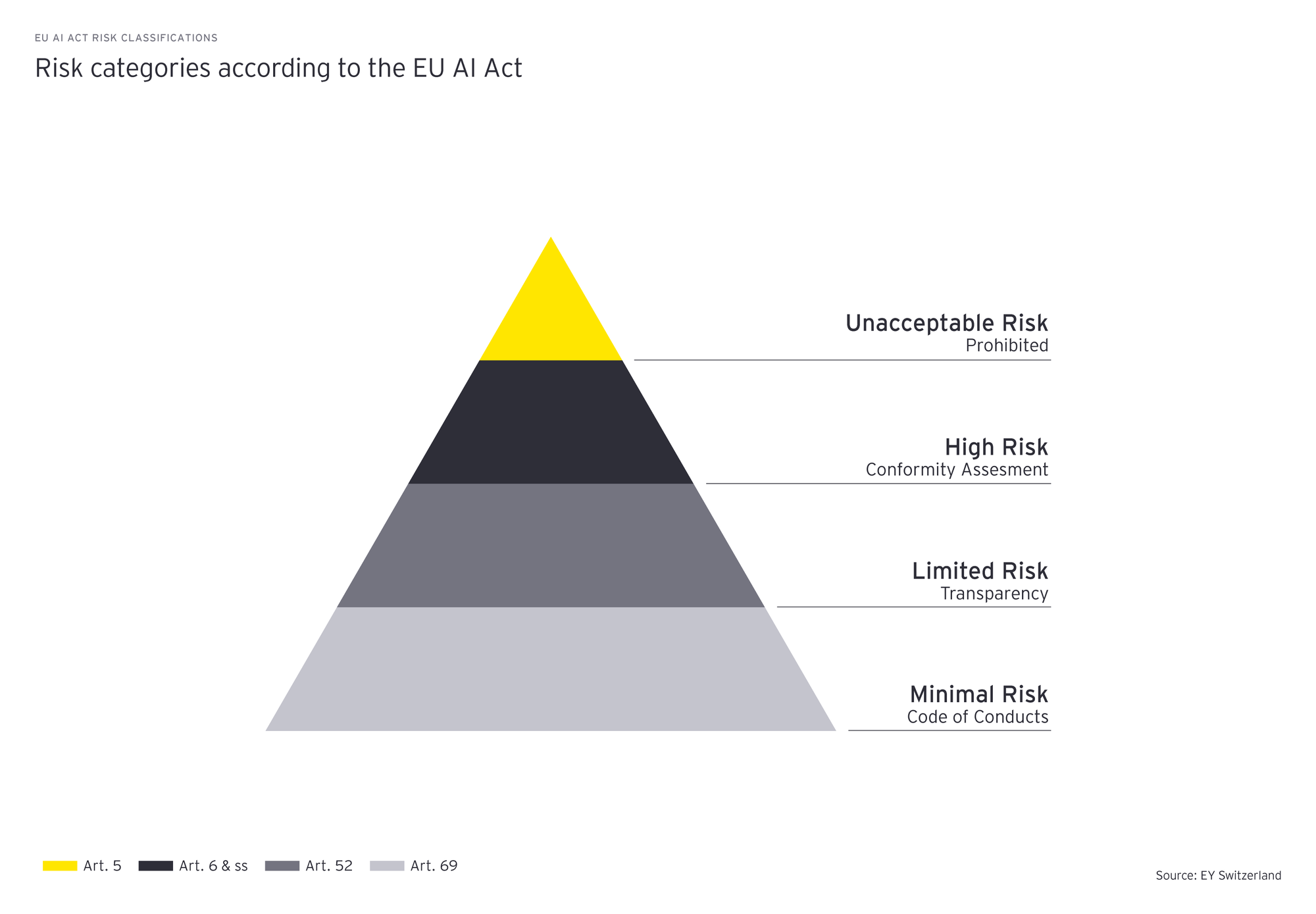

The Act classifies Artificial Intelligence systems along several dimensions. First is the type of AI. There are two: standard AI systems that perform specifically designated tasks, and general-purpose AI (GPAI) models that are provided as a service to perform a variety of tasks. Then there are the four risk classifications for these AI systems and models: Prohibited AI, High Risk, Lower Risk, and No Risk. GPAI models can additionally be assigned one of two classifications: regular models, and models with systemic risk. Lastly, the individuals or groups tied to each of these AI systems and models are affected differently depending on their own categorization, which is determined by the nature of their role with regard to the AI system(s). These categorizations and their definitions are as follows:

- A provider “means a natural or legal person, public authority, agency or other body that develops an AI system or a general-purpose AI model or that has an AI system or a general-purpose AI model developed and places it on the market or puts the AI system into service under its own name or trademark, whether for payment or free of charge”

- A deployer “means a natural or legal person, public authority, agency or other body using an AI system under its authority except where the AI system is used in the course of a personal non-professional activity”

- An authorized representative “means a natural or legal person located or established in the Union who has received and accepted a written mandate from a provider of an AI system or a general-purpose AI model to, respectively, perform and carry out on its behalf the obligations and procedures established by this Regulation”

- An importer “means a natural or legal person located or established in the Union that places on the market an AI system that bears the name or trademark of a natural or legal person established in a third country”

- A distributor “means a natural or legal person in the supply chain, other than the provider or the importer, that makes an AI system available on the Union market”

- An operator “means a provider, product manufacturer, deployer, authorised representative, importer, or distributor”

From here on, I will refer to all individuals, groups, corporations, or conglomerates that carry a responsibility under these defined roles as Operators unless otherwise noted. Each of these roles comes with a certain set of obligations that must be upheld under the legislation. I will not be presenting all of those responsibilities. However! The European Commission has kindly made available a third-party developed “calculator” that lets Operators determine their responsibilities by simply answering a series of questions. The tool is called the EU AI Act Compliance Checker and is located here. The end result is a brief summary of where your systems fall in the risk assessment (e.g. High-risk exception), along with the numbered articles in the Act you are obliged to comply with. It is very handy, although not an incredibly comprehensive tool. I recommend reviewing the available PDF version before playing around with the actual questionnaire, because it will seem limited in its responses at first. (I believe the form itself is slightly bugged, and choosing certain answers returns an immediate classification rather than the designated follow-up questions.) It is still useful though.

EY — The EU AI Act: what it means for your business

Generally speaking, certain defined criteria must be met in order for an AI system or GPAI model to be classified as Prohibited or High-risk. Even so, the obligations listed throughout the Act depend more on one’s associated role than on the classification of the AI systems one implements. For instance, the GPAI Code of Practice, which can be found here, lays out how to comply with the Act. Alongside the Code of Practice are the “Guidelines on the scope of obligations for providers of general-purpose AI models under the AI Act” which can be found here. That resource, of course, pertains to designated providers of general-purpose AI models. All of these resources and more, including the original document of course, make absolutely clear all of the following:

- What are the characteristics of a Prohibited AI?

- What are the characteristics of a High-risk AI?

- What do the specific Operators of AI need to do in order to uphold the Act?

- When does a Lower-risk AI become a High-risk AI?

- What is the definition of a GPAI with systemic risk? In fact, this is the overview of guidelines for GPAI models.

- What exceptions are there? (e.g. open-source AI models)

- How does a Deployer turn into a Provider? In other words, when does one type of Operator acquire or transfer into the role of another type of Operator? An Operator of AI can hold multiple roles or change from one to another.

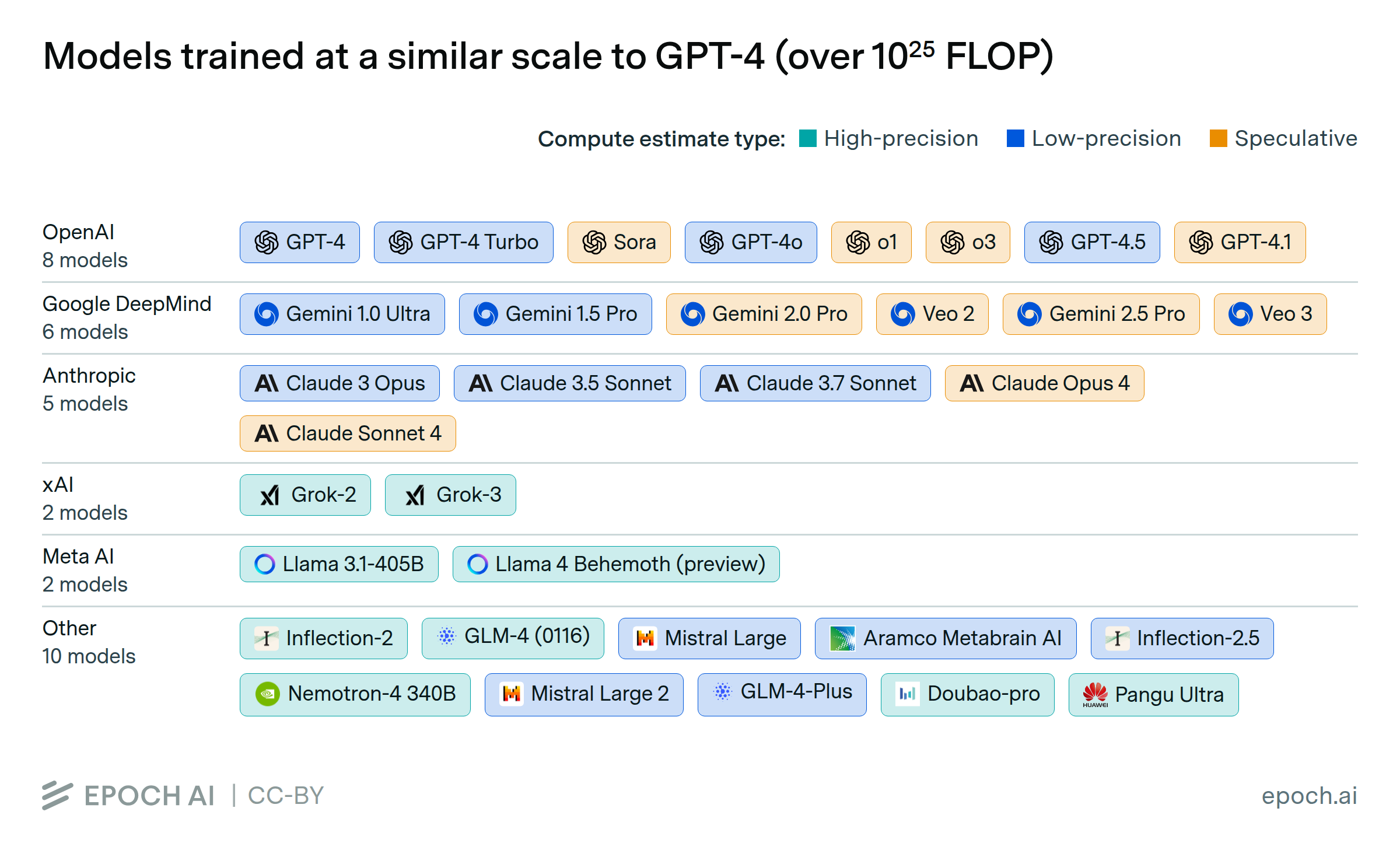

I can assure you that although I could list all of the highly specific criteria and definitions provided by the European Parliament and European Commission for the Union, it is best to gather information straight from the source if you want to be as serious and secure in your operations as possible. In fact, they even offer “helper” websites, many of which I have admittedly already provided for you. There are numerous articles out there by technology and law firms that do their best to summarize the information as well. They list the training-compute (FLOP) ratio that determines when a downstream user becomes the new Provider for a given GPAI model, with or without systemic risk. Not all of this information will pertain to any one system, model, individual, or company at a given time. What is critical is that all AI Operators, with the aid of an “Authorized Representative” or otherwise, make the effort to uphold the regulations provided and maintained by the Act.

Epoch — models over 10²⁵ FLOP (large-scale)
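To make the compute thresholds mentioned above a little more concrete, here is a minimal sketch of how an Operator might flag a GPAI model for closer review. The 10²⁵ FLOP figure is the presumption threshold for systemic risk referenced above. The one-third ratio for downstream modifiers reflects my reading of the Commission's GPAI guidelines and should be treated as an assumption to verify against the current text. The class and function names are hypothetical, and nothing here is a legal determination.

```python
from dataclasses import dataclass

# Presumption threshold for systemic risk (the 10^25 FLOP figure cited above).
SYSTEMIC_RISK_FLOP = 1e25

# ASSUMPTION: a downstream modifier is treated as the new Provider when the
# compute used for the modification exceeds one third of the original model's
# training compute. Verify this ratio against the Commission's guidelines.
MODIFIER_COMPUTE_RATIO = 1 / 3


@dataclass(frozen=True)
class GpaiModel:
    """Hypothetical record for a general-purpose AI model."""
    name: str
    training_flop: float  # cumulative training compute, in FLOP


def presumed_systemic_risk(model: GpaiModel) -> bool:
    """True when training compute is greater than the 10^25 FLOP threshold."""
    return model.training_flop > SYSTEMIC_RISK_FLOP


def modifier_becomes_provider(original: GpaiModel, modification_flop: float) -> bool:
    """True when the modification compute exceeds the assumed ratio."""
    return modification_flop > original.training_flop * MODIFIER_COMPUTE_RATIO


base = GpaiModel("hypothetical-base-model", training_flop=3e25)
print(presumed_systemic_risk(base))           # large model: flagged for review
print(modifier_becomes_provider(base, 5e24))  # light fine-tune: below the ratio
```

A check like this only tells you when to go read the guidelines more closely. The actual classification still depends on the Act and the Commission's documents.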

Now, onto the primary purpose of this article. I have given my initial insights into the Act as comprehensively as I can without running off at the mouth about the sheer mass of information in the legislative document. What I will do, however, is review exactly what businesses (more specifically SMEs), local to the EU or not, can expect to start doing as part of regular operations in order to uphold these new legal regulations with ease.

Business impact

From sandboxes to sanctions, small and medium enterprises that fall under one of the Operator roles related to AI (e.g. Provider) have to account for a multitude of criteria that will come to bear on them should they be audited by the governing bodies established in the Act.

I want to include this direct link to “Chapter VI: Measures in Support of Innovation, Article 62: Measures for Providers and Deployers, in Particular SMEs, including Start-Ups”. The title is pretty self-explanatory, but a significant note is the referenced “Date of Entry into Force” of August 2nd, 2026. I have yet to explicitly lay out the three-year timeline between 2024 and 2027, which runs from the initial ratification to the final implementation of the Act. The Dates of Entry into Force, however, designate the date from which Operators will start being held responsible for the obligations required of them under the Act. (Strictly speaking, the Act as a whole entered into force on August 1st, 2024; Article 113 describes these later milestones as the dates from which its provisions apply.) The most significant dates of Entry into Force are:

- February 2nd, 2025 for Chapters 1 and 2 of the Act

- August 2nd, 2025 for Chapter 3 Section 4, Chapter 5, Chapters 7 and 12, and Article 78

- August 2nd, 2027 for Article 6(1) and the corresponding obligations in the regulation
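For planning purposes, the milestone dates in this section can be kept in a small lookup so that a compliance calendar can answer "what already applies today?" and "what is next?". This is only a sketch: the scope strings are my paraphrases of the list above plus the August 2nd, 2026 date referenced for Chapter 6, and the function names are hypothetical.

```python
from datetime import date
from typing import Optional

# Milestone dates as listed in this article. The scope strings are
# paraphrases for planning, not legal text.
MILESTONES = [
    (date(2025, 2, 2), "Chapters 1 and 2"),
    (date(2025, 8, 2), "Chapter 3 Section 4, Chapter 5, Chapters 7 and 12, Article 78"),
    (date(2026, 8, 2), "Chapter 6 (Articles 57 and 62), as referenced in this article"),
    (date(2027, 8, 2), "Article 6 and corresponding obligations"),
]


def milestones_in_effect(today: date) -> list[str]:
    """Return the scopes whose milestone date has already been reached."""
    return [scope for start, scope in MILESTONES if start <= today]


def next_milestone(today: date) -> Optional[tuple[date, str]]:
    """Return the earliest milestone still ahead of `today`, or None."""
    upcoming = [(start, scope) for start, scope in MILESTONES if start > today]
    return min(upcoming) if upcoming else None


print(milestones_in_effect(date.today()))
print(next_milestone(date.today()))
```

Keeping the dates in one table means a new or amended milestone is a one-line change rather than a hunt through calendar entries.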

Of course, August 2nd, 2026 is listed separately: it is the date shown for Chapter 6, including Article 57, and under Article 113 it is also the general date from which the rest of the Act applies. With that in mind, let’s discuss what should be considered necessary to understand and participate in prior to August 2nd of this year, about 6 months from the time of this publication. I mention Chapter 6 Article 57 because that is the section on “AI Regulatory Sandboxes”, and we cannot begin without first mentioning sandboxes. An AI Regulatory Sandbox is defined as a “controlled framework set up by a competent authority which offers providers or prospective providers of AI systems the possibility to develop, train, validate and test, where appropriate in real-world conditions, an innovative AI system, pursuant to a sandbox plan for a limited time under regulatory supervision.” For context, a sandbox plan “means a document agreed between the participating provider and the competent authority describing the objectives, conditions, timeframe, methodology and requirements for the activities carried out within the sandbox.”

I would like to frame the discussion on sandboxes in the scenario of an amusement park. In order for your AI systems or models to be placed on the market (or for you to go on a ride at the park), you must first be approved by the regulating bodies defined in the Act (the ride operator/ticket collector). To be eligible to join the ride, you must wait in line and go through the extensive process it takes to get to the front and be approved. However, there is a shortcut, the Fast Pass! AI Regulatory Sandboxes are Member State-managed environments, run and paid for by governing bodies, with professionals who ensure the AI developed and trained within the sandbox meets all lawful, health, safety, and privacy regulations, both in the Act and in other legislation. If an AI is developed or trained within the sandbox, and consequently monitored and reported on to the governing bodies there, its time to market, and therefore to live service, is significantly reduced. In fact, the Act states that SMEs, including start-ups, that have a registered office or a branch in the Union get “priority access to the AI regulatory sandboxes.” Notably, and as explicitly stated in the Act, this is not a mandatory path. It simply acts as a streamlined route that hopes to assist innovative AI development for SMEs and companies that want to prioritize both a product that upholds all legislative obligations and a speedy deployment. Or in other words, a Fast Pass.

The Act also specifies that Member States should provide dedicated “channels for communication with SMEs, including start-ups, deployers, other innovators and, as appropriate, local public authorities, to support SMEs throughout their development path by providing guidance and responding to queries about the implementation of this Regulation.” Yes! You read that correctly! It is clear that the European Commission is prioritizing the assistance of SMEs in the development of innovative AI systems and models for deployment in the EU. Oh, did I mention that access to these sandboxes is free of charge for SMEs, including start-ups? See Article 58 Section 2 point (d).

Which brings me to a great point. The EU AI Act is not trying to be controlling. It is not a one-stop shop for figuring out what implemented AI systems and models should and shouldn’t do. It is a guide: a set of lawfully implemented rules meant to assist in developing AI that will substantially impact society without harming it.

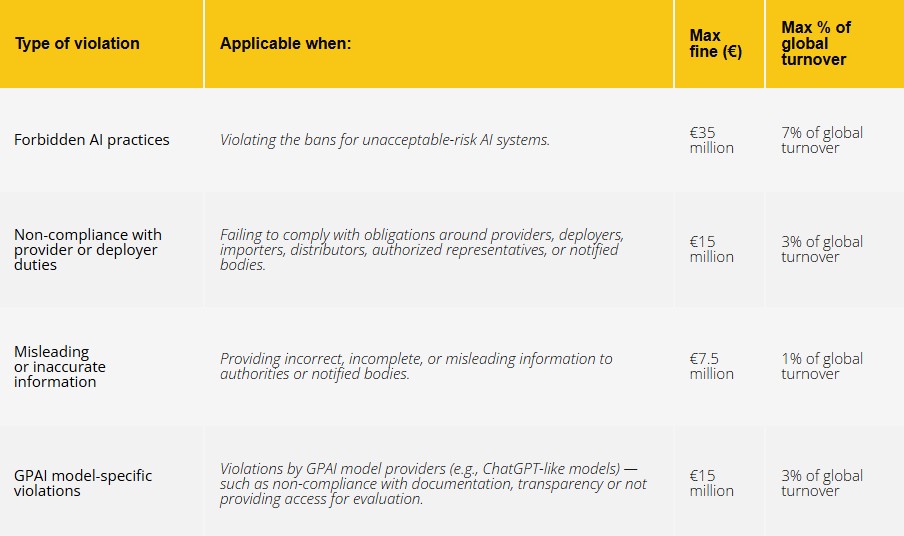

For a business of any size, either in the EU or abroad, there are a multitude of steps that should be taken to ensure cooperation and compliance. It should be duly noted that there are penalties for non-compliance with the regulation. These primarily come in the form of pretty significant fines that depend on severity and revenue. There is also, unquestionably, Prohibited AI, which will have to be removed from the market. In order to avoid these sorts of penalties, however, proactive steps can be taken.

First is inventory. There should be a comprehensive resource detailing how many systems you have, what kinds of systems they are, where those systems are deployed, which systems are in development, and more. This inventory will be an easy indicator of the legal obligations that will need to be upheld for each individual system or model, because classification and role designation should be performed as part of it. By the end of this step you should know which regulatory Operator role you hold for each AI system or model in your repertoire, as well as each one’s classification. This means that if you identify a Prohibited AI under your control, some serious conversations should be had.
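An inventory like this does not need special tooling to get started. As a minimal sketch, assuming you track the Operator role and risk classification per system using the terms from this article, a small set of records is enough to group systems by risk and to surface anything classified as Prohibited. All names and fields below are hypothetical, not a prescribed schema.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    AUTHORISED_REPRESENTATIVE = "authorised representative"
    IMPORTER = "importer"
    DISTRIBUTOR = "distributor"


class RiskClass(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LOWER = "lower"
    NONE = "none"


@dataclass
class AiSystemRecord:
    """One inventory row; the fields are illustrative assumptions."""
    name: str
    role: Role
    risk: RiskClass
    deployed_in: list[str] = field(default_factory=list)
    in_development: bool = False
    is_gpai: bool = False


def group_by_risk(inventory: list[AiSystemRecord]) -> dict[RiskClass, list[str]]:
    """Map each risk class to the names of the systems carrying it."""
    grouped: dict[RiskClass, list[str]] = defaultdict(list)
    for record in inventory:
        grouped[record.risk].append(record.name)
    return dict(grouped)


def needs_escalation(inventory: list[AiSystemRecord]) -> list[str]:
    """Names of systems classified as Prohibited, for immediate review."""
    return [r.name for r in inventory if r.risk is RiskClass.PROHIBITED]


inventory = [
    AiSystemRecord("support-chatbot", Role.DEPLOYER, RiskClass.LOWER, ["DE", "FR"]),
    AiSystemRecord("cv-screening", Role.PROVIDER, RiskClass.HIGH, ["NL"]),
]
print(group_by_risk(inventory))
print(needs_escalation(inventory))
```

Because one organization can hold different roles for different systems, the role lives on each record rather than on the company as a whole.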

Second is establishing correspondence. This is especially necessary for parties outside the European Union. It will include getting in touch with the governing body that maintains the AI Regulatory Sandbox, if desired, as well as appointing an “Authorized Representative” if required.

Third will be AI literacy. Of course, you will be in communication with assigned members of the regulating bodies. It is important that all staff and personnel who handle the AI are clearly knowledgeable about, experienced with, and trained on the systems and models. The definition and expectation of AI literacy for Providers and Deployers can be found in Chapter 1 Article 4. The argument can be made that human misunderstanding of these systems and models is a systemic risk in itself. Poorly trained staff can misclassify the systems or underestimate the obligations that follow from their classifications. They might also fail to recognize changes in the AI that would lead to Operator role updates. Therefore, AI literacy should be treated as a genuine control.

Fourth, you will require an ongoing strategy. Set up periodic risk assessments for your categorized systems. The primary methodology that the Act relies on is self-assessment and a sort of honor system before all else, which is why an ongoing strategy matters. In theory, your systems could progress from Lower Risk to High Risk, and this will require a shift in compliance methods. Additionally, if you adopt a GPAI model with systemic risk, more accommodations need to be made to maintain your obligations under the Act.
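One way to keep periodic assessments from slipping is to record the date and outcome of each one, then check for two things: systems that are overdue for review, and systems whose risk classification changed since the previous assessment. The sketch below assumes a 90-day cadence purely for illustration; that interval is my own placeholder, not something this article or the Act prescribes, and the names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# ASSUMPTION: a 90-day review cadence, chosen only for illustration.
REVIEW_INTERVAL = timedelta(days=90)


@dataclass(frozen=True)
class Assessment:
    """Outcome of one self-assessment for one system (hypothetical shape)."""
    system: str
    risk: str          # e.g. "lower" or "high", using this article's terms
    assessed_on: date


def due_for_review(latest: list[Assessment], today: date) -> list[str]:
    """Systems whose most recent assessment is at least one interval old."""
    return [a.system for a in latest if today - a.assessed_on >= REVIEW_INTERVAL]


def risk_changes(previous: list[Assessment],
                 current: list[Assessment]) -> dict[str, tuple[str, str]]:
    """Map system name to (old risk, new risk) where the class changed."""
    before = {a.system: a.risk for a in previous}
    return {
        a.system: (before[a.system], a.risk)
        for a in current
        if a.system in before and before[a.system] != a.risk
    }


previous = [Assessment("cv-screening", "lower", date(2025, 9, 1))]
current = [Assessment("cv-screening", "high", date(2026, 1, 15))]
print(risk_changes(previous, current))
print(due_for_review(current, date(2026, 6, 1)))
```

A change from Lower Risk to High Risk surfaced this way is the trigger for the shift in compliance methods described above.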

Software Improvement Group — EU AI Act summary

These are just my generic suggestions for businesses that need to develop a compliance plan before the Date of Entry into Force on August 2nd, 2026. The Code of Practice, which itself is not legally binding, additionally provides a list of obligations specifically for GPAI model Providers:

- “Provision of technical documentation to the AI Office and National Competent Authorities”

- “Provision of relevant information to providers downstream that seek to integrate models into their AI or GPAI system”

- “Summaries of training data used”

- “Policies for complying with existing Union copyright law”

Hopefully this short list gives you an idea of the kind of expectations the European Commission holds for affected parties. Even more specifically, to showcase the difference in compliance obligations depending on categorization, here is a list of additional obligations for GPAI models with systemic risk:

- “State of the art model evaluations”

- “Risk assessment and mitigation”

- “Serious incident reporting, including corrective measures”

- “Adequate cybersecurity protection”

What to keep in mind

That last bullet point brings me to my final discussion on the actual text itself. What does this mean in terms of security? What should be looked out for? What are possible security repercussions? The Act specifies that to “ensure a level of cybersecurity appropriate to the risks, suitable measures, such as security controls, should therefore be taken by the providers of high-risk AI systems, also taking into account as appropriate the underlying ICT infrastructure.” This should be a no-brainer. We’re dealing with systems that, within a relatively scoped implementation, are basically given the keys to the kingdom. But I think it's more than just a question of “how do we protect and secure the AI?” It is a question of how we protect the companies and individuals themselves. Going back to the sandboxes to make my point: in a practical sense, highly invasive disclosure is required for the governing bodies to reasonably vet the regulated system in their sandbox environment prior to deployment to market. What this means for extended applications of data privacy and intellectual property, I don’t really know. Maybe that isn’t a worrisome consideration for some. Admittedly, when it comes to the practical cybersecurity requirements for these systems and models, the Act does an excellent job of summarizing what specifically should be done or looked at. Beyond Chapter 3 Section 2, Article 15 “Accuracy, robustness and cybersecurity”, there are quite a few recitals in the Preamble that discuss the topic and provide guidelines on actions to take as a provider or deployer. I do believe it is too early to consider what the final repercussions may be in a theoretical case where security implementations of the Act result in broken trust or a lack of truly secure defenses.

One serious consideration to take into account, though, is how compliance itself will become a new attack surface for all Operators. Documentation, risk assessments, incident reports, training data, and various other technical disclosures are regulatory necessities, but they are also intelligence artifacts. The defensive assumption should be that adversaries will exploit these if they are improperly secured. This will require Operators to prioritize access control, encryption, audit logging, and serious defense in depth, especially when it comes to the third-party sharing I mentioned with regard to governing bodies and the sandbox authorities.
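To illustrate just the audit-logging piece, here is a minimal sketch of a tamper-evident access log for compliance artifacts, in which every entry stores the hash of the entry before it. Editing or deleting an earlier entry breaks the chain and is caught on verification. This is a swapped-in general technique (a simple hash chain using Python's standard `hashlib`), not something the Act prescribes, and the field names are hypothetical. It does not replace access control or encryption.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: dict) -> str:
    """SHA-256 over the entry's fields, excluding its own stored hash."""
    payload = {key: value for key, value in entry.items() if key != "hash"}
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log: list[dict], actor: str, artifact: str, action: str) -> dict:
    """Append an access record that is chained to the previous entry."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "artifact": artifact,
        "action": action,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry["hash"] = _digest(entry)
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Return False if any entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(entry):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, "compliance-officer", "risk-assessment-2026-Q1.pdf", "read")
append_entry(log, "sandbox-authority", "technical-documentation.zip", "export")
print(verify_chain(log))
```

In practice the log itself would also need to be stored somewhere its writers cannot silently rewrite it, since anyone who can rebuild the whole chain can defeat the check.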

Where this leads us

So what is the plan for the future now that a new piece of legislation has set a precedent? Well, only time will tell. Thinking back on the effect the GDPR had on the global market, I’m sure it will be a similar case this time. I am of course referring to the “Brussels Effect”. Again, I am releasing this article before total implementation has even taken effect. There will probably be no absolute end to the legislation. There are 13 Annexes now, and official standards are in the process of being established. Once those standards are in place, I think a whole new discussion will sprout.

The EU AI Act will reshape model lifecycles, ML DevOps pipelines, risk governance frameworks, and inter-Operator contracts. Over time, these compliance-aligned frameworks and architectures will become the default global approach to AI. Anticipating this and establishing EU compliance from the outset will set companies up to operate globally without hindrance as the impact of the Act and other legislation takes effect in due time.

Conclusion

This seems to be a matter of who is on board. In short, the outcomes the European Union wants to see from this legislation hinge on the philosophy of groupthink. Now, I am sure the many professionals who contributed to this genuinely impressive and comprehensive piece of legislation would recoil at my suggestion that success hinges on groupthink. But I don’t believe I am entirely wrong. For anyone deciding how to approach the regulation of AI on a global scale, adopting the Act seems the safe choice compared with trying to set a new precedent. I am sure many governing bodies outside the European Union will come to their own conclusions and adopt similar legislation. But in the grand scheme of this new era, Artificial Intelligence will absolutely dominate everything in time. We are all braving a frontier that has never been explored. Whether we end up at the same destination of a harmonious AI-driven society or not will be determined by which trains we take to get there, and whether we ride them responsibly.

Sources

Primary and official

- Artificial Intelligence Act hub (artificialintelligenceact.eu)

- EUR-Lex — online document

- PDF version (Official Journal)

- EU AI Act Compliance Checker

- Overview of GPAI models

- Code of Practice (introduction)

- Recital 83

- High level summary

- Small business’ guide to the AI Act

- AI regulatory sandboxes (Article 57)

- SME suggested measures (Article 62)

- Recital 143

- Standardization

- GPAI providers guidelines (Commission)

- Model sizes aid (Epoch)

- EU nation state implementation guide

Analysis and reference

- KPMG analysis — how the EU AI Act affects US-based companies

- AI legislation tracker (American Action Forum)

- Informatica — EU AI Act global impact

- Greenberg Traurig — business implications and compliance strategies

- Baker Sterchi — what US companies need to know

- EY — what it means for your business

- Software Improvement Group — EU AI Act summary

- IBM Think — EU AI Act